The computer infrastructure behind the Large Hadron Collider

CERN’s Large Hadron Collider (LHC) will produce roughly 15 petabytes of data each year (15,000,000,000,000,000 bytes). In other words, the LHC is not only huge in physical size (filling a 17-mile-long underground tunnel), but it will also produce enormous amounts of data for researchers to bite into.

CERN seems to be well-equipped to handle the data from the gigantic particle accelerator when you take a look at their data center.

A benefit of open source software is the ability to take the code base of an application and develop it in a new direction. This is, as most of you probably know, called forking, and it is very common in the open source community. For example, many Linux distributions can be traced back to Debian, Fedora, or Slackware.

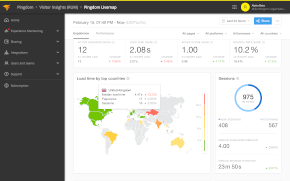

Every day brings a new set of outages on the Internet. Websites go down, online services run into trouble, networks have glitches, and so on. When a lot of users are affected, these outages make the news and set the blogosphere abuzz. We here at Pingdom work with downtime-related issues every day and probably spend more time reading about these things than most, so we decided to sum up the year so far for your convenience, and add some analysis of our own in the process.

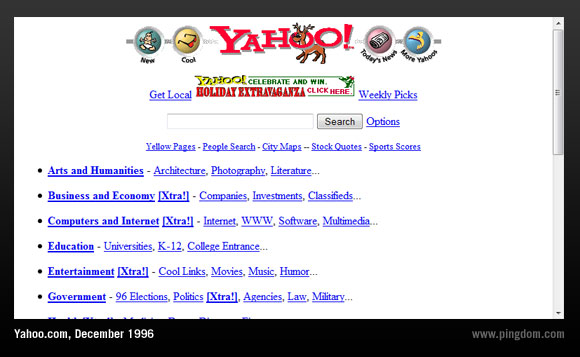

By the end of the nineties the Web had risen to become a huge factor in the world economy, and we were at the height of the dot-com bubble. Billion-dollar acquisitions of Web companies were not uncommon.
