Sluggish performance

We end up with too many copies of data because every system makes its own. Simply put, copy data is the copy after copy of documents, files and other electronic data created by siloed systems such as disaster recovery, business continuity, test, development and backup, all operating independently of each other. This is a big reason why companies routinely face data storage issues and experience problems with backups, restores and overall network performance.

By 2016, spending on storage for copy data will approach $50 billion and copy data capacity will exceed 315 million terabytes.

Multiple copies of the same data waste storage space and make accessing or restoring mission-critical data challenging. Networks get bogged down by copy, store, move and restore functions, leaving mission-critical applications to suffer from sluggish performance.

Just one full copy of the data

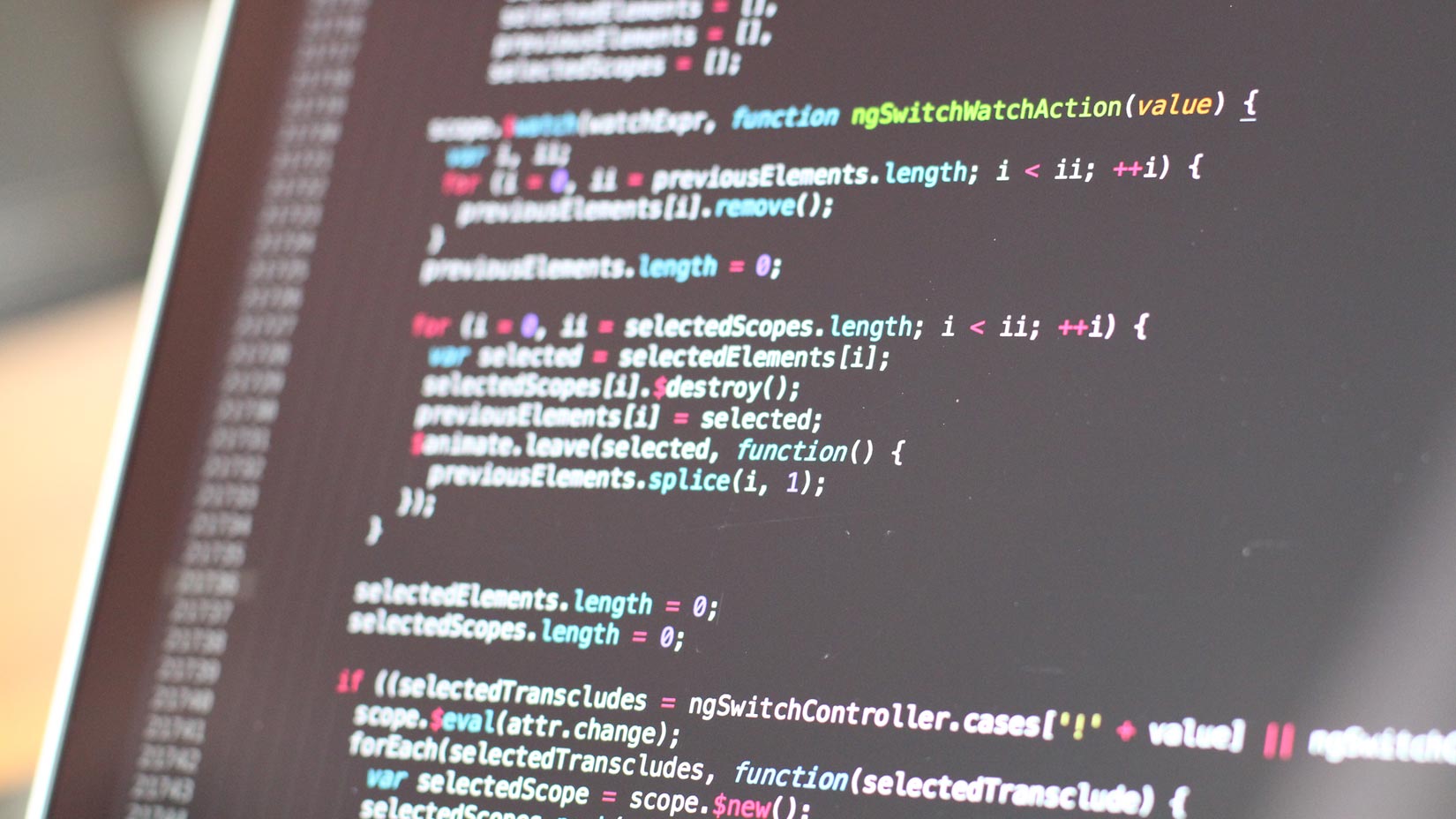

Why maintain multiple tools that function independently with overlapping data and redundant information? You can eliminate the unnecessary duplication of production data by relying on the Copy Data Management (CDM) approach. The data can be used on an as-needed basis. Then, a development, analytics or test environment can be based on an exact replica of the organization’s production data without unnecessarily consuming storage space.

A company might back up its primary data to a backup server, then restore a portion of that data to a lab server for testing or development. That data set is now stored three times: on the primary system, on the backup server and on the lab server. CDM seeks to reduce the number of copies to two: the primary data and the backup copy. When unique changes are made to the production environment, the CDM software creates and stores a snapshot (a set of reference markers) of the incremental changes at the block level. No additional full copies of the data are ever created. Instead, the snapshot mechanism creates a read/write differencing disk with a parent-child relationship to the backup copy.
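To make the parent-child idea concrete, here is a minimal Python sketch of a differencing disk. It is an illustration only, not how any particular CDM product works: the class names `BackupCopy` and `VirtualCopy` and the four-block data set are hypothetical.

```python
class BackupCopy:
    """The single full backup copy, treated as read-only blocks (hypothetical)."""

    def __init__(self, blocks):
        self._blocks = tuple(blocks)

    def read(self, index):
        return self._blocks[index]

    def __len__(self):
        return len(self._blocks)


class VirtualCopy:
    """A read/write child that stores only the blocks it has changed (hypothetical)."""

    def __init__(self, parent):
        self.parent = parent
        self.changed = {}  # block index -> new contents

    def read(self, index):
        # Changed blocks come from the child; everything else from the parent.
        if index in self.changed:
            return self.changed[index]
        return self.parent.read(index)

    def write(self, index, data):
        if not 0 <= index < len(self.parent):
            raise IndexError("block index out of range")
        # The write lands in the child, so the backup copy is never modified.
        self.changed[index] = data

    def blocks_stored(self):
        return len(self.changed)


backup = BackupCopy([b"alpha", b"bravo", b"charlie", b"delta"])
test_env = VirtualCopy(backup)
test_env.write(2, b"CHANGED")

print(test_env.read(0))  # served from the backup copy
print(test_env.read(2))  # served from the child
print(backup.read(2))    # the backup itself is untouched
print(test_env.blocks_stored(), "of", len(backup), "blocks stored by the child")
```

The test environment sees a full, writable data set, yet the only extra storage it consumes is the one block it changed.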

There are two main types of storage snapshot. A copy-on-write snapshot utility creates a snapshot of changes to stored data every time new data is entered or existing data is updated. This allows rapid recovery. A split-mirror snapshot utility references all the data on a set of mirrored drives. This makes it possible to access data offline. However, this is a slower process, and it requires more storage space for each snapshot.
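A copy-on-write snapshot can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not a real storage driver: the `Volume` and `Snapshot` classes are hypothetical, and blocks are plain strings. Before a block is overwritten, its old contents are saved for the snapshot, so the snapshot keeps showing the data as it was.

```python
class Snapshot:
    """Point-in-time view that holds only blocks overwritten since it was taken."""

    def __init__(self, volume):
        self.volume = volume
        self.saved = {}  # block index -> contents at snapshot time

    def preserve(self, index, old_data):
        # Keep only the first (oldest) version of each block.
        self.saved.setdefault(index, old_data)

    def read(self, index):
        # Unchanged blocks are read straight from the live volume.
        if index in self.saved:
            return self.saved[index]
        return self.volume.blocks[index]


class Volume:
    """A live volume made of blocks (hypothetical, in-memory)."""

    def __init__(self, blocks):
        self.blocks = list(blocks)
        self.snapshots = []

    def snapshot(self):
        snap = Snapshot(self)
        self.snapshots.append(snap)
        return snap

    def write(self, index, data):
        # Copy-on-write: save the old block before overwriting it.
        for snap in self.snapshots:
            snap.preserve(index, self.blocks[index])
        self.blocks[index] = data


vol = Volume(["a", "b", "c"])
snap = vol.snapshot()
vol.write(1, "B")
vol.write(1, "BB")

print(vol.blocks[1])    # current data on the live volume
print(snap.read(1))     # data as it was when the snapshot was taken
print(len(snap.saved))  # blocks the snapshot had to store
```

Taking the snapshot copies nothing up front, which is why this style is fast. A split-mirror snapshot, by contrast, references all the data on the mirrored drives, which is why it needs more space.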

The benefits

This is how CDM differs from traditional backup:

- Reducing the number of full copies reduces the chance of server sprawl

- Valuable storage space isn’t taken up with unnecessary copies of data

- You don’t have to worry about someone accidentally modifying the contents of the backup

And here are the top 10 evaluation criteria for copy data management and data virtualization, according to Kyle Hailey, who says:

“The average enterprise creates 8-10 copies of every production data source for application development, QA, user acceptance, production support, reporting, and backup. Thus, a 5 TB production database creates 40-50 TB of downstream copies, and a F500 firm might have more than 1,000 production databases generating petabytes of copy data.”
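The arithmetic behind those figures is easy to check. The short Python sketch below reproduces the 40-50 TB range from the quote, then scales it up. The fleet calculation is an assumption for illustration only: it supposes exactly 1,000 databases of 5 TB each and decimal units (1 PB = 1,000 TB), neither of which the quote states.

```python
TB_PER_PB = 1000  # decimal units, an assumption


def downstream_copy_tb(production_tb, copies):
    """Storage consumed by full copies of one production data source."""
    return production_tb * copies


# Figures from the quote: a 5 TB database copied 8 to 10 times.
low_tb = downstream_copy_tb(5, 8)
high_tb = downstream_copy_tb(5, 10)
print(f"One 5 TB database: {low_tb}-{high_tb} TB of copies")

# Hypothetical fleet: 1,000 databases, all 5 TB (assumed, not from the quote).
databases = 1000
fleet_low_pb = databases * low_tb / TB_PER_PB
fleet_high_pb = databases * high_tb / TB_PER_PB
print(f"{databases} such databases: {fleet_low_pb:.0f}-{fleet_high_pb:.0f} PB of copies")
```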

Reducing storage consumption

Some refer to this technology as copy data virtualization or a virtual lab. In any case, the technology reduces storage consumption while making the data easier to use. And it’s becoming much more common. How many copies of data are you storing?