Some of you may have noticed that over the past 4 days, we have experienced issues and downtime in parts of our service, which we know is frustrating. In the spirit of transparency, we'd like to share the good, the bad and the ugly of what went down (pun intended), how we fixed it and how we've tried to future-proof our service.

We'd like to apologize for the outages and the resulting disruption to our service. Whilst some issues took a while to fix, we want to assure you that a) we worked as fast as possible to resolve them and b) we have taken steps to avoid similar incidents in the future.

The Outages

Note: All times referenced below are Coordinated Universal Time (UTC).

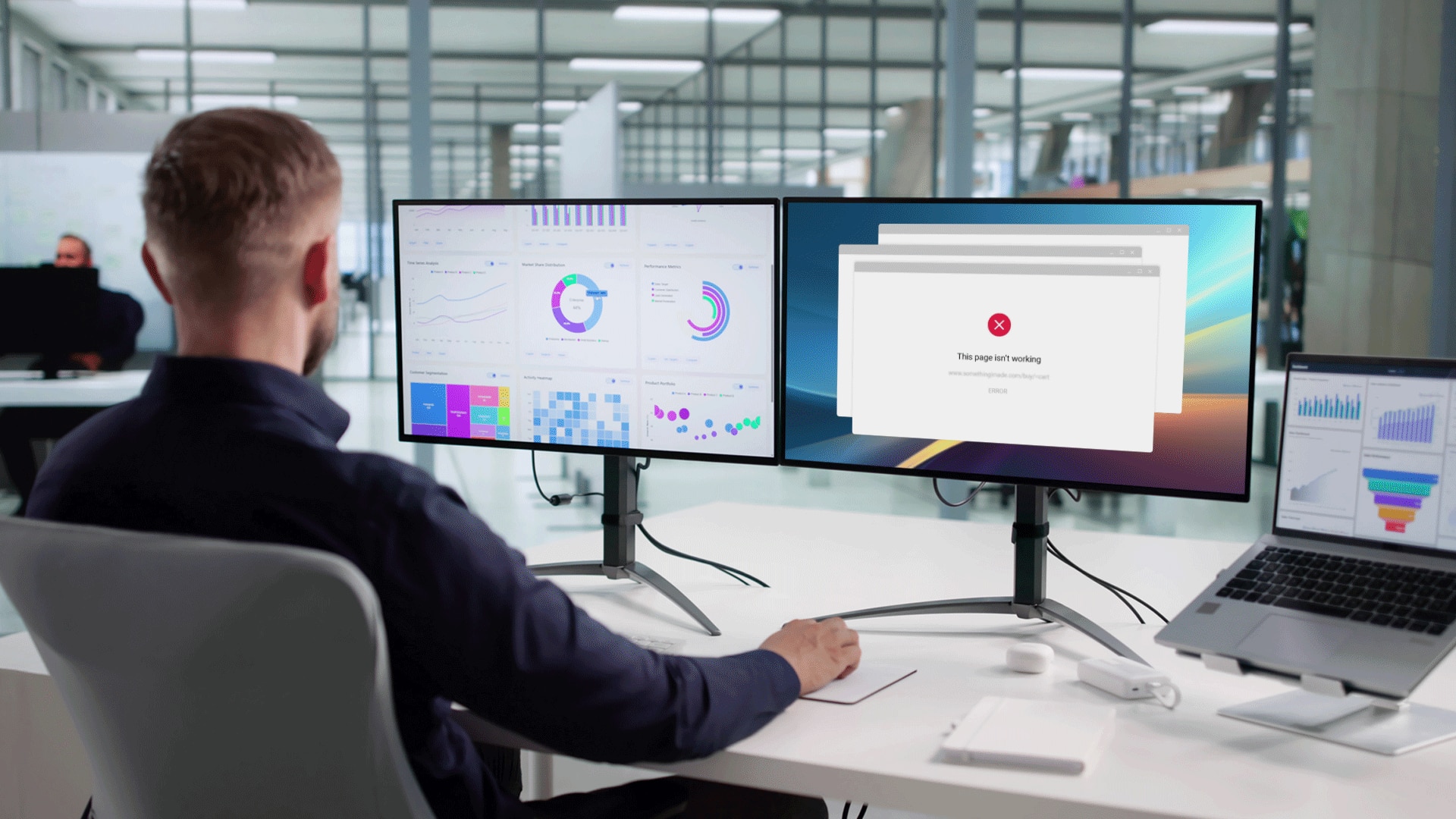

My Pingdom portal outage

- Our dashboard, My Pingdom, was unavailable on Thursday July 7th 2016 from 15:23 to 15:55 (32 minutes).

- Alerting and monitoring of our customers' checks were not affected, but access to My Pingdom was limited due to a failure in one of our load balancers.

- Pingdom Ops fixed the issue with the load balancer and full service was restored.

We reinstalled the load balancers with new software which should prevent this type of incident from occurring in the future. Additional monitoring has been set up to catch any degradation before it affects customers.

Performance degradation of My Pingdom

- Occurred on Friday July 8th 2016 07:18 – 16:28

- Our database became overloaded when one of its tables grew to 10 times its normal size. As a result, customers experienced a general slowdown in My Pingdom.

- Pingdom Ops resolved the issue at 16:28, once the database had been properly cleaned up.

This was our biggest outage: although My Pingdom was available, it loaded unacceptably slowly for our customers. The issue stemmed from a malformed SQL query that duplicated every row in a table a couple of times. While resolving it, we tried several approaches that failed, including migrating the database to different servers. In the end we decided to simply purge the affected rows from the database. Due to the size of our database, pinpointing the source of the problem was quite time-consuming, which added to the duration of the incident.
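For the technically curious, here is a minimal sketch of how this kind of bug behaves, using Python's built-in sqlite3 module. The table name `events`, its columns and the duplicating statement are hypothetical stand-ins, not the actual query or schema, and the production database engine may differ. It shows how a statement that copies a table back into itself doubles the row count every time it runs, and one way to purge the copies while keeping a single row per distinct record.

```python
import sqlite3

# Hypothetical table standing in for the affected one; the real schema is not public.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (check_id INTEGER, ts INTEGER, status TEXT)")
conn.executemany(
    "INSERT INTO events VALUES (?, ?, ?)",
    [(1, 100, "up"), (1, 160, "down"), (2, 100, "up")],
)


def row_count(conn):
    """Return the number of rows currently in the events table."""
    return conn.execute("SELECT COUNT(*) FROM events").fetchone()[0]


original = row_count(conn)

# A malformed statement with no filter copies every existing row back into
# the same table, so each run doubles the table: 3 -> 6 -> 12 rows.
for _ in range(2):
    conn.execute("INSERT INTO events SELECT * FROM events")
inflated = row_count(conn)

# Purge the copies: keep only the row with the lowest SQLite rowid in each
# group of identical (check_id, ts, status) values.
conn.execute(
    """
    DELETE FROM events
    WHERE rowid NOT IN (
        SELECT MIN(rowid) FROM events GROUP BY check_id, ts, status
    )
    """
)
conn.commit()

print(original, inflated, row_count(conn))  # prints: 3 12 3
```

On a small table this purge is instant; on a very large production table the same idea is typically run in batches so the cleanup itself doesn't overload the database.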

Checklists and reports unavailable

- Occurred on Monday July 11th 2016 12:11 – 13:05

- A configuration error prevented reports and checks from loading in My Pingdom, affecting all customers. As with the first incident, monitoring and alerting were not affected in any way, but it was impossible to load data in My Pingdom.

We'll put our hands up and say that most of the errors that led to these incidents were man-made: we done goofed. That said, whenever humans are in the mix, there is always a risk that something will fall over and create an incident. We have learned from this (as we do from every incident), and we're learning to be extra thorough in our checks. More aggressive testing could have caught the database overload sooner, but given the nature of the bug it might still have been difficult to detect during testing: the table grew slowly at first, so the problem was not immediately apparent.