The future is already here

The Internet has profoundly evolved over the last 20 years. Yet its workhorse protocol, HTTP/1.1 (in use since 1999), hasn’t.

Until now.

HTTP/2 is the future and it’s already here. The standard has just been finalized and major browsers are beginning to support it. HTTP/2 focuses on performance: specifically, reducing end-user perceived latency as well as network and server resource usage.

Check out this cool demo of HTTP/2’s impact on download times; the comparison shows just how much multiplexing helps.

The primary goals for HTTP/2 are to:

- Reduce latency by enabling full request and response multiplexing

- Minimize protocol overhead via efficient compression of HTTP header fields

- Add support for request prioritization and server push

Or, why some of yesterday’s best practices are today’s HTTP/2 anti-patterns.

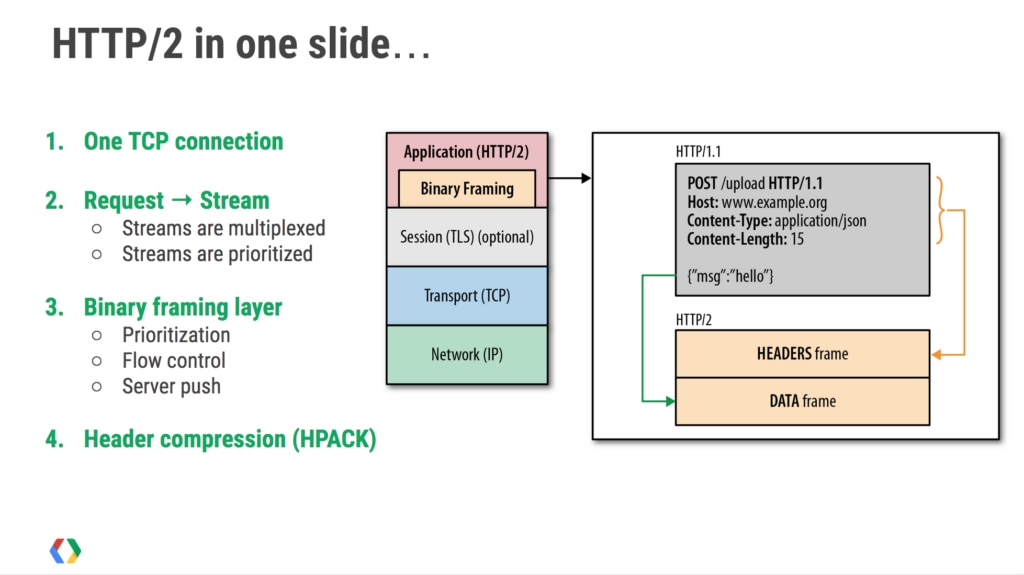

A single connection

One main goal is to allow the use of a single connection from browsers to a web site. At a high level, HTTP/2 is binary instead of textual, and fully multiplexed instead of blocking. It can therefore use one connection for parallelism, while header compression reduces overhead. HTTP/2 also allows servers to push responses proactively into client caches.
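To make “binary instead of textual” concrete: every HTTP/2 frame starts with a fixed 9-byte header (RFC 7540, section 4.1) holding a 24-bit payload length, an 8-bit type, 8-bit flags, one reserved bit and a 31-bit stream identifier. Below is a minimal sketch of encoding and decoding that header with Python’s standard library; the `FrameHeader` class and function names are illustrative and not taken from any HTTP/2 library.

```python
import struct
from dataclasses import dataclass

# Frame type codes defined in RFC 7540, section 6.
FRAME_TYPES = {0x0: "DATA", 0x1: "HEADERS", 0x2: "PRIORITY", 0x3: "RST_STREAM",
               0x4: "SETTINGS", 0x5: "PUSH_PROMISE", 0x6: "PING", 0x7: "GOAWAY",
               0x8: "WINDOW_UPDATE", 0x9: "CONTINUATION"}


@dataclass
class FrameHeader:
    length: int      # 24-bit payload length (header not included)
    frame_type: int  # 8-bit frame type
    flags: int       # 8-bit flags, meaning depends on the type
    stream_id: int   # 31-bit stream identifier; 0 means the whole connection


def encode_header(h: FrameHeader) -> bytes:
    """Serialize the 9-byte frame header in network byte order."""
    if not 0 <= h.length < 1 << 24:
        raise ValueError("length must fit in 24 bits")
    # 3 bytes of length, then type, flags and a 4-byte word whose top bit is reserved.
    return h.length.to_bytes(3, "big") + struct.pack(
        ">BBI", h.frame_type, h.flags, h.stream_id & 0x7FFFFFFF)


def decode_header(data: bytes) -> FrameHeader:
    """Parse the first 9 bytes of `data` as a frame header."""
    if len(data) < 9:
        raise ValueError("an HTTP/2 frame header is 9 bytes long")
    length = int.from_bytes(data[0:3], "big")
    frame_type, flags, word = struct.unpack(">BBI", data[3:9])
    return FrameHeader(length, frame_type, flags, word & 0x7FFFFFFF)  # drop reserved bit
```

For example, a HEADERS frame (type `0x1`) with the END_STREAM and END_HEADERS flags set (`0x1 | 0x4`) on stream 1 carrying a 13-byte payload encodes to the bytes `00 00 0d 01 05 00 00 00 01`. A fixed-size binary header like this is what lets a receiver find frame boundaries without scanning for line endings, as HTTP/1.x parsers must.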

HTTP/2 consists of two specifications: Hypertext Transfer Protocol version 2 (RFC 7540) and HPACK: Header Compression for HTTP/2 (RFC 7541). The IESG formally approved both specifications, which then went through the RFC Editor’s editorial process and were published as RFC 7540 and RFC 7541 in May 2015.
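Part of HPACK’s compactness comes from a variable-length integer encoding used for table indexes and string lengths: small values fit in the low N bits of a byte, and larger ones spill into continuation bytes of 7 bits each (RFC 7541, section 5.1). A minimal Python sketch of that scheme follows; the function names are illustrative, and the decoder assumes well-formed, complete input.

```python
from __future__ import annotations


def encode_int(value: int, prefix_bits: int, first_byte_flags: int = 0) -> bytes:
    """Encode `value` with an N-bit prefix; `first_byte_flags` fills the bits above it."""
    if not 1 <= prefix_bits <= 8:
        raise ValueError("prefix must be 1..8 bits")
    max_prefix = (1 << prefix_bits) - 1
    if value < max_prefix:
        return bytes([first_byte_flags | value])  # fits entirely in the prefix
    out = [first_byte_flags | max_prefix]         # all prefix bits set: more bytes follow
    value -= max_prefix
    while value >= 128:
        out.append((value % 128) + 128)           # low 7 bits plus continuation bit
        value //= 128
    out.append(value)                             # final byte, continuation bit clear
    return bytes(out)


def decode_int(data: bytes, prefix_bits: int) -> tuple[int, int]:
    """Return (value, number of bytes consumed)."""
    max_prefix = (1 << prefix_bits) - 1
    value = data[0] & max_prefix
    if value < max_prefix:
        return value, 1
    shift, i = 0, 1
    while True:
        b = data[i]
        value += (b & 0x7F) << shift
        shift += 7
        i += 1
        if not b & 0x80:  # continuation bit clear: this was the last byte
            return value, i
```

The values 10 and 1337 with a 5-bit prefix, and 42 with an 8-bit prefix, are the worked examples in RFC 7541, appendix C.1: `encode_int(1337, 5)` yields the three bytes `0x1f 0x9a 0x0a`, while `encode_int(10, 5)` needs only one.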

As you probably know, the web performance community’s mantra is “avoid HTTP requests” because HTTP/1 makes them expensive. With HTTP/2, spriting, inlining, concatenation and similar techniques should no longer be necessary (in some cases they may actually hurt performance). The browser relies on the server to deliver the response data in an optimal way. What matters is not just the number of bytes or requests per second, but the order in which bytes are delivered.

Some of the sweet HTTP/2 benefits are:

- Multiplexing and concurrency: Several requests can be sent in rapid succession on the same TCP connection, and responses can be received out of order – eliminating the need for multiple connections between the client and the server

- Stream dependencies: The client can indicate to the server which of the resources are more important than the others

- Header compression: HTTP header size is drastically reduced

- Server push: The server can send resources the client has not yet requested
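Stream dependencies and weights (RFC 7540, section 5.3) form a tree: a stream should receive resources only when the streams it depends on cannot make progress, and siblings share in proportion to their weights (1 to 256, default 16). The function below is a simplified, hypothetical model of that rule, not how any particular browser or server schedules frames; it ignores exclusive dependencies and reprioritization.

```python
from __future__ import annotations

from collections import defaultdict


def allocate(ready: set[int], parent: dict[int, int], weight: dict[int, int],
             bandwidth: float = 1.0) -> dict[int, float]:
    """Idealized share of `bandwidth` for each stream that can send right now.

    parent: stream id -> id of the stream it depends on (0 is the root)
    weight: stream id -> weight in 1..256 (missing entries use the default, 16)
    ready:  ids of streams that currently have data to send
    """
    children = defaultdict(list)
    for sid, p in parent.items():
        children[p].append(sid)

    def has_ready(node: int) -> bool:
        # True if this stream or anything depending on it can make progress.
        return node in ready or any(has_ready(c) for c in children[node])

    shares: dict[int, float] = {}

    def walk(node: int, amount: float) -> None:
        if node in ready:
            shares[node] = amount  # a ready stream uses its whole share; dependents wait
            return
        active = [c for c in children[node] if has_ready(c)]
        total = sum(weight.get(c, 16) for c in active)
        for c in active:
            walk(c, amount * weight.get(c, 16) / total)

    walk(0, bandwidth)
    return shares
```

With stream 1 depending on the root and streams 3 (weight 4) and 5 (weight 12) depending on stream 1, `allocate({3, 5}, {1: 0, 3: 1, 5: 1}, {1: 16, 3: 4, 5: 12})` gives stream 3 a quarter and stream 5 three quarters of the bandwidth while stream 1 is blocked; once stream 1 has data to send, it gets everything.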

A single HTTP/2 connection can contain multiple concurrently open streams, with either endpoint interleaving frames from multiple streams. Streams can be established and used unilaterally or shared by either the client or server, and they can be closed by either endpoint. The order in which frames are sent within a stream is significant: recipients process frames in the order they are received. Multiplexing the streams means that frames from many streams are mixed over the same connection. Two (or more) individual threads of data are merged into a single one and then split up again on the other side.
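The “merge, then split again” idea can be sketched in a few lines of Python. This toy version tags each chunk with its stream ID, interleaves chunks round-robin, and reassembles them on the receiving side; the 4-byte chunk size is an arbitrary assumption for readability (real DATA frames default to a maximum payload of 16,384 bytes), and the function names are hypothetical.

```python
from __future__ import annotations

from collections import defaultdict
from itertools import zip_longest


def multiplex(streams: dict[int, bytes], chunk: int = 4) -> list[tuple[int, bytes]]:
    """Cut each stream into chunks and interleave them round-robin as (stream id, payload)."""
    per_stream = {sid: [data[i:i + chunk] for i in range(0, len(data), chunk)]
                  for sid, data in streams.items()}
    ids = list(per_stream)
    frames = []
    for row in zip_longest(*(per_stream[sid] for sid in ids)):
        for sid, piece in zip(ids, row):
            if piece is not None:  # shorter streams simply run out of chunks
                frames.append((sid, piece))
    return frames


def demultiplex(frames: list[tuple[int, bytes]]) -> dict[int, bytes]:
    """Reassemble payloads per stream, relying on in-order delivery within each stream."""
    buffers: dict[int, bytearray] = defaultdict(bytearray)
    for sid, piece in frames:
        buffers[sid].extend(piece)
    return {sid: bytes(buf) for sid, buf in buffers.items()}
```

Feeding `{1: b"<html>hello</html>", 3: b"body{}"}` through `multiplex` produces frames for streams 1, 3, 1, 3, 1, 1, 1, and `demultiplex` restores both payloads exactly. Frames from different streams mix freely, but the order within each stream is preserved, which is why that order is significant.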

Optimizing web application delivery

How about making applications faster, simpler and more robust? HTTP/2 will make it all happen by allowing you to undo many of the HTTP/1.1 workarounds previously done within applications and address these concerns within the transport layer.

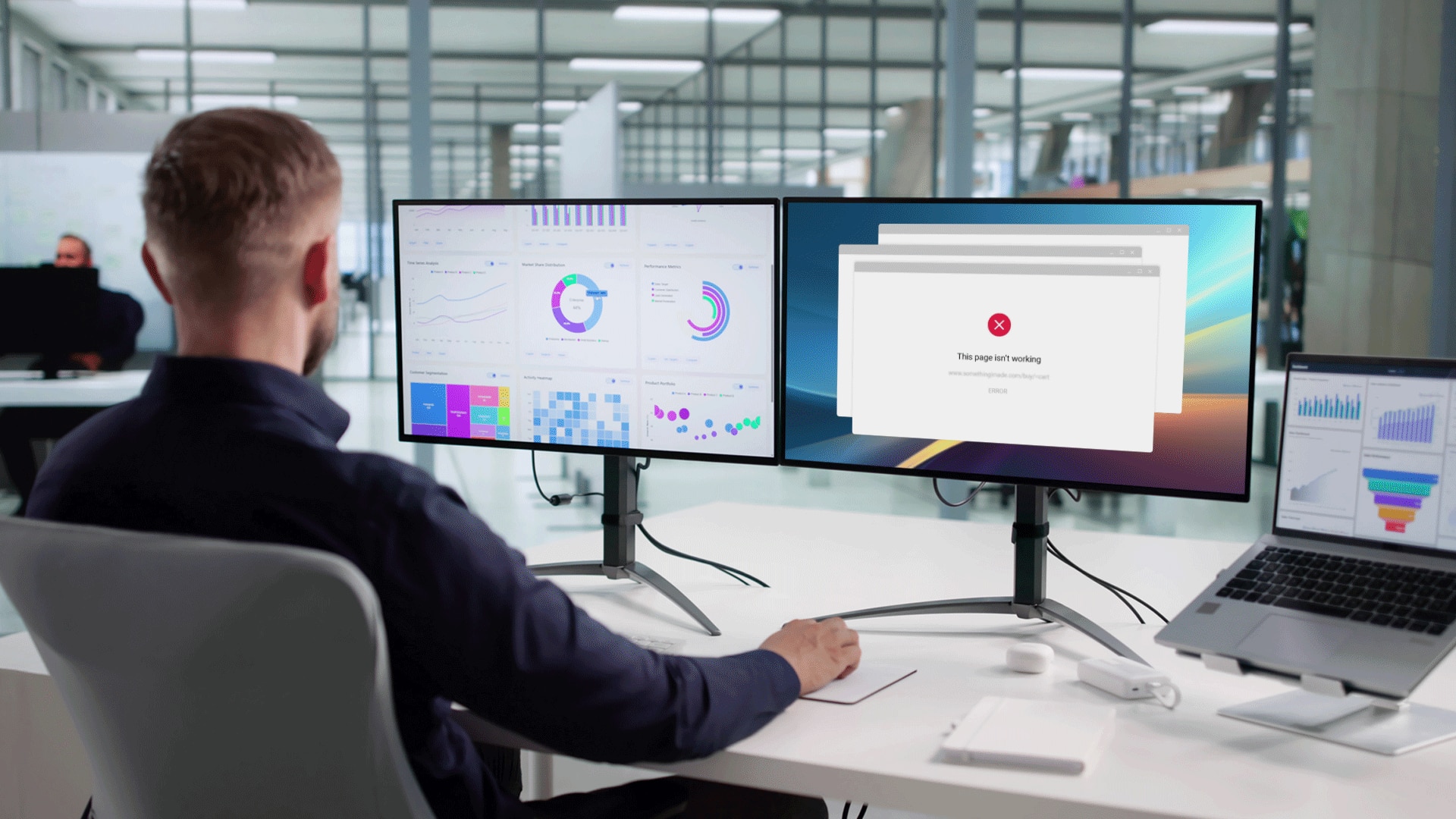

HTTP/1.x has a problem called “head-of-line blocking”, where effectively only one request can be outstanding on a connection at a time. HTTP/1.1 tried to fix this with pipelining, but it didn’t completely address the problem (a large or slow response can still block the others behind it). This forces clients to rely on heuristics (often little more than guesses) to decide which requests to put on which connection to the origin, and when.
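A toy model shows why head-of-line blocking hurts. Assume, purely for illustration, a link that moves one unit of data per tick and three responses of 10, 1 and 1 units. With pipelining, responses must come back in request order; with multiplexing, the sender can interleave them. The function names are hypothetical.

```python
from __future__ import annotations


def fifo_completion(sizes: list[int]) -> list[int]:
    """Pipelined HTTP/1.1: each response waits for every response ahead of it."""
    t, done = 0, []
    for size in sizes:
        t += size
        done.append(t)
    return done


def round_robin_completion(sizes: list[int]) -> list[int]:
    """Multiplexed: send one unit from each unfinished response in turn."""
    remaining = list(sizes)
    done = [0] * len(sizes)
    t = 0
    while any(remaining):
        for i, r in enumerate(remaining):
            if r:
                t += 1
                remaining[i] -= 1
                if remaining[i] == 0:
                    done[i] = t  # tick at which response i finished
    return done
```

For sizes `[10, 1, 1]`, pipelining finishes the responses at ticks 10, 11 and 12, while interleaving finishes the two small ones at ticks 2 and 3 and the large one at 12. Total transfer time is unchanged; what improves is that small responses no longer sit behind a large one. The model ignores TCP itself, where a lost packet can still stall every stream on an HTTP/2 connection.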

Since it’s common for a page to load ten or more times as many resources as there are available connections, this can severely impact performance, often resulting in a waterfall of blocked requests. Multiplexing addresses these problems by allowing multiple request and response messages to be in flight at the same time. It’s even possible to intermingle parts of one message with another on the wire. This, in turn, allows a client to use just one connection per origin to load a page.
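The waterfall effect can be estimated with simple arithmetic: if each connection carries one request at a time, N resources over k connections need ⌈N/k⌉ sequential round trips. The sketch below assumes 6 connections per origin (a common browser default) for HTTP/1.1 and 100 concurrent streams for HTTP/2 (the minimum RFC 7540 recommends for `SETTINGS_MAX_CONCURRENT_STREAMS`); the page size and RTT are hypothetical, and bandwidth and server time are ignored.

```python
import math


def request_rounds(resources: int, parallel: int) -> int:
    """Sequential request waves when at most `parallel` requests can be in flight."""
    if parallel < 1:
        raise ValueError("need at least one connection or stream")
    return math.ceil(resources / parallel)


def estimated_wait_ms(resources: int, parallel: int, rtt_ms: float) -> float:
    """Lower bound from round trips alone (ignores bandwidth and server processing)."""
    return request_rounds(resources, parallel) * rtt_ms


# Hypothetical page: 60 resources from one origin over a 100 ms round-trip time.
http1 = estimated_wait_ms(60, parallel=6, rtt_ms=100)    # 6 connections, one request each
http2 = estimated_wait_ms(60, parallel=100, rtt_ms=100)  # 100 streams on one connection
```

Under these assumptions HTTP/1.1 needs 10 waves (about 1,000 ms of round trips) while HTTP/2 needs a single wave (about 100 ms). Real page loads are messier, but the ratio shows why multiplexing matters most on high-latency links.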

We’ve always had caching, but we were never able to optimize for churn (the ratio of bytes already in the cache to new bytes we have to fetch after deploying an update) because small requests were too expensive. This is no longer the case.
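Churn is easy to quantify. Suppose, hypothetically, a site ships three scripts and a deploy changes only one of them. If they were concatenated into one bundle, returning visitors must download the whole bundle again; if they are served as separate files (cheap under HTTP/2), only the changed file is fetched. File names and sizes below are made up for illustration.

```python
from __future__ import annotations


def bytes_refetched(modules: dict[str, int], changed: set[str], bundled: bool) -> int:
    """Bytes a returning visitor downloads after a deploy that modified `changed`."""
    if bundled:
        # One concatenated file: any change invalidates the entire bundle.
        return sum(modules.values()) if any(name in modules for name in changed) else 0
    # Separate files: only the modified ones miss the cache.
    return sum(size for name, size in modules.items() if name in changed)


# Hypothetical sizes in bytes.
modules = {"vendor.js": 200_000, "app.js": 40_000, "ui.js": 60_000}
```

With `changed={"app.js"}`, the bundled build costs 300,000 bytes per returning visitor, while separate files cost 40,000. Under HTTP/1.x, the extra requests for separate files often outweighed that saving; with multiplexing they are cheap enough that finer-grained caching pays off.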

HTTP/2 enables a number of optimizations that were previously not possible, and it has already surpassed SPDY in adoption. Chrome will deprecate SPDY (and NPN) in early 2016.

New TLS + NPN/ALPN connections in Chrome (May 26, 2015 – Chrome telemetry):

- ~27% negotiate HTTP/1

- ~28% negotiate SPDY/3.1

- ~45% negotiate HTTP/2
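The split above is decided during the TLS handshake: the client advertises the protocols it supports and the server picks one. Below is a minimal client-side sketch using Python’s standard `ssl` module, assuming Python 3.7+ built against an OpenSSL with ALPN support and network access. Only ALPN is shown (not the older NPN extension), and the function names are hypothetical.

```python
import socket
import ssl
from typing import Optional


def h2_context() -> ssl.SSLContext:
    """TLS client context that offers HTTP/2 via ALPN, falling back to HTTP/1.1."""
    ctx = ssl.create_default_context()
    # "h2" is the ALPN identifier for HTTP/2 over TLS; list order expresses preference.
    ctx.set_alpn_protocols(["h2", "http/1.1"])
    return ctx


def negotiated_protocol(host: str, port: int = 443, timeout: float = 5.0) -> Optional[str]:
    """Connect to host:port and return the protocol the server selected, if any."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with h2_context().wrap_socket(sock, server_hostname=host) as tls:
            return tls.selected_alpn_protocol()
```

Calling `negotiated_protocol("example.com")` (a placeholder host) returns `"h2"` when the server agrees to HTTP/2, `"http/1.1"` when it picks that instead, and `None` when no protocol was negotiated via ALPN.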

You will not need to change your websites or applications to ensure they continue to work properly. HTTP/2 isn’t a ground-up rewrite of the protocol: all the core concepts (HTTP methods, status codes, URIs and header fields) and their semantics remain in place, and it should be possible to use the same APIs as HTTP/1.x (possibly with some small additions) to represent the protocol. What HTTP/2 changes is how the data is formatted (framed) and transported between the client and server, which manage the entire process, and it hides all that complexity from applications within the new framing layer.
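One way to see that semantics stay the same while framing changes: an HTTP/1.1 request line and its headers map almost mechanically onto an HTTP/2 header list. The request line becomes `:method`, `:scheme` and `:path` pseudo-header fields, `Host` becomes `:authority`, names are lowercased, and connection-specific headers are dropped (RFC 7540, sections 8.1.2.2 and 8.1.2.3). This is a simplified, hypothetical sketch that covers only those cases, not a complete converter.

```python
from __future__ import annotations

# Connection-specific header fields that must not appear in HTTP/2 (RFC 7540, 8.1.2.2).
CONNECTION_SPECIFIC = {"connection", "keep-alive", "proxy-connection",
                       "transfer-encoding", "upgrade"}


def to_h2_headers(method: str, target: str, headers: list[tuple[str, str]],
                  scheme: str = "https") -> list[tuple[str, str]]:
    """Translate an HTTP/1.1 request head into an HTTP/2-style header list."""
    pseudo = [(":method", method), (":scheme", scheme), (":path", target)]
    regular = []
    for name, value in headers:
        lname = name.lower()  # HTTP/2 requires lowercase field names
        if lname == "host":
            pseudo.append((":authority", value))
        elif lname in CONNECTION_SPECIFIC:
            continue  # meaningless once requests share one multiplexed connection
        elif lname == "te" and value.strip().lower() != "trailers":
            continue  # TE may only carry the value "trailers"
        else:
            regular.append((lname, value))
    return pseudo + regular  # pseudo-headers must precede regular fields
```

For instance, `GET /index.html` with `Host: example.com`, `Accept: text/html` and `Connection: keep-alive` becomes `:method`, `:scheme`, `:path`, `:authority` and `accept`, with the `Connection` header dropped. The request means exactly the same thing; only its representation differs.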

As a result, all existing applications can be delivered without modification. Your application code and HTTP APIs will continue to work uninterrupted, and your application will likely perform better and consume fewer resources on both client and server. All frames (e.g. HEADERS and DATA) are sent over a single TCP connection, and frame delivery is prioritized based on stream dependencies and weights. DATA frames are subject to both per-stream and connection-level flow control.
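Per-stream and connection-level flow control combine simply: a sender may only send as many DATA bytes as the smaller of the two windows allows, and WINDOW_UPDATE frames (on stream 0 for the connection, or on a specific stream) reopen them. Both windows start at 65,535 bytes (RFC 7540, section 6.9.2). Below is a minimal sender-side sketch; the `SendWindows` class is hypothetical and skips details such as window-overflow errors.

```python
DEFAULT_WINDOW = 65_535  # initial window for the connection and each stream (RFC 7540, 6.9.2)


class SendWindows:
    """Tracks how many DATA bytes a sender may still send."""

    def __init__(self, stream_initial: int = DEFAULT_WINDOW) -> None:
        self.stream_initial = stream_initial  # SETTINGS_INITIAL_WINDOW_SIZE affects only streams
        self.connection = DEFAULT_WINDOW      # the connection window always starts at 65,535
        self.streams = {}                     # stream id -> remaining window

    def _stream(self, sid: int) -> int:
        return self.streams.setdefault(sid, self.stream_initial)

    def sendable(self, sid: int, wanted: int) -> int:
        """How much of `wanted` both the stream and connection windows allow now."""
        return max(0, min(wanted, self._stream(sid), self.connection))

    def consume(self, sid: int, n: int) -> None:
        """Record that `n` DATA bytes were sent on stream `sid`."""
        if n > self.sendable(sid, n):
            raise ValueError("sending would exceed a flow-control window")
        self.streams[sid] -= n
        self.connection -= n

    def window_update(self, sid: int, increment: int) -> None:
        """Apply a received WINDOW_UPDATE; stream 0 refers to the whole connection."""
        if not 1 <= increment <= 2**31 - 1:
            raise ValueError("increment must be between 1 and 2^31 - 1")
        if sid == 0:
            self.connection += increment
        else:
            self.streams[sid] = self._stream(sid) + increment
```

After sending 60,000 bytes on stream 1, a fresh stream 3 can send only 5,535 bytes, because the shared connection window is nearly exhausted even though stream 3’s own window is full. A WINDOW_UPDATE on stream 0 lifts that limit, while stream 1 stays capped by its own window until it receives an update too. This is how one slow consumer can be throttled without stalling every other stream.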

Well-exercised technology

“9% of all Firefox (M36) HTTP transactions are happening over HTTP/2. There are actually more HTTP/2 connections made than SPDY ones. This is well exercised technology”, says Patrick McManus, Platform Software Engineer at Mozilla, and adds this about the huge win for overhead reduction:

“One great metric around that which I enjoy is the fraction of connections created that carry just a single HTTP transaction (and thus make that transaction bear all the overhead). For HTTP/1, 74% of our active connections carry just a single transaction – persistent connections just aren’t as helpful as we all want. But in HTTP/2 that number plummets to 25%.”

HTTP/3 and beyond

We haven’t yet seen clients and servers tune their implementations to take full advantage of everything this new protocol offers, but chances are very good that this will lead to faster page loads and more responsive web sites.

In short: a better web experience.

“To speed up the Internet at large, we should look for more ways to bring down RTT. What if we could reduce cross-atlantic RTTs from 150 ms to 100 ms? This would have a larger effect on the speed of the Internet than increasing a user’s bandwidth from 3.9 Mbps to 10 Mbps or even 1 Gbps”, said Mike Belshe, Co-founder and CEO at BitGo.

Ilya Grigorik, Developer Advocate at Google, dreams of a world with consistent 100 ms rendering times on desktop and mobile. He’s helping build better Internet infrastructure to deliver that dream, and you can (and should) read more in his comprehensive free online book, High Performance Browser Networking.

Right now, people are keen to get HTTP/2 out the door, so a few more advanced and experimental features have been left out, such as pushing TLS certificates and DNS entries to the client to improve performance. HTTP/3 might include these if experiments go well. But for now, let’s celebrate the new protocol on the block.

You sure are welcome.