For decades, supercomputers have helped scientists perform calculations that wouldn’t have been possible on the regular computers of their time. Not only has the construction of supercomputers pushed the envelope of what’s possible in computing, but the calculations they perform have also helped advance science and technology and, ultimately, improve our lives.

This post pays tribute to some of the most powerful supercomputers the world has ever seen.

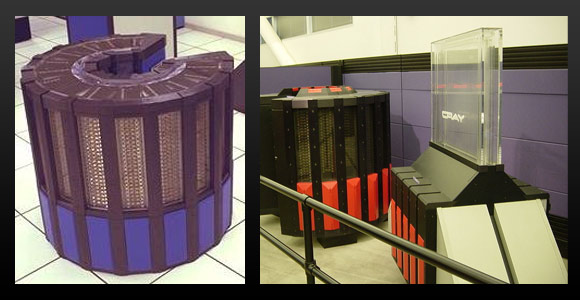

CRAY-1

The first Cray computer was developed by a team led by the legendary Seymour Cray. It was a Freon-cooled 64-bit system running at 80MHz with 8.39 megabytes of RAM. Careful use of vector instructions could yield a peak performance of 250 megaflops. Together with its Freon cooling system, the first model of the Cray-1 (Cray-1A) weighed 5.5 tons and was delivered to the Los Alamos National Laboratory in 1976. A year later, the machine made its first international shipment to the European Centre for Medium-Range Weather Forecasts, and within the next two years it was introduced in Germany, Japan, and the U.K.

The famous C-shape was created to keep the cables as short as possible (the curve makes the distances on the inside shorter). Shorter cables allowed the system to operate at a higher frequency (80MHz was insanely fast at the time).

Above: The classic Cray computer C-shape in evidence, presumably there for performance, not to mimic the C in Cray.

CRAY-2

The Cray-2 was the world’s fastest computer from 1985 to 1989, capable of 1.9 gigaflops. Like the Cray-1, it was a 64-bit system. The first Cray-2 was delivered with 2GB of RAM, which was considered massive at the time. Its circuit boards were packed in eight tight, interconnected layers on top of each other, which made any form of air cooling impossible. Instead, a special electrically insulating liquid called Fluorinert (from 3M, the company that brought you Post-it notes) was used to cool the circuits. Thanks to the heat exchanger water tank that came with the liquid cooling system, visible in the pictures below, the Cray-2 earned the nickname “Bubbles.”

Developed for the United States Departments of Defense and Energy, it was used mainly for nuclear weapons research and oceanographic development.

Above: Note the cooling system’s heat exchanger water tank in the right picture. It earned the Cray-2 its “Bubbles” nickname.

The Connection Machine 5

The CM-5 was part of a family of supercomputers by Thinking Machines Corporation that was initially intended for artificial intelligence applications and symbolic processing but found more success in the field of computational science. A famous CM-5 system was FROSTBURG, which was installed at the US National Security Agency (NSA) in 1991, operating until 1997. The FROSTBURG computer was initially delivered with 256 processing nodes, but was upgraded in 1993 to a total of 512 processing nodes and 2TB of storage. It used SPARC RISC processors and had a peak performance of 65.5 gigaflops. It had cool-looking light panels that showed processing node usage and could also be used for diagnostic purposes. A CM-5 was also featured in the movie Jurassic Park (in the island control room). It was decommissioned in October 1996.

An animated short film called Liquid Selves was rendered on a CM.

Above: The CM-5 and its light panels in clear evidence. Cool enough to land it a role in Jurassic Park.

Fujitsu Numerical Wind Tunnel

Fujitsu developed the supercomputer known as the Numerical Wind Tunnel in cooperation with Japan’s National Aerospace Laboratory. As its name implies, it was used to simulate wind turbulence on aircraft and spacecraft, and to forecast weather. The system was capable of 124.5 gigaflops and was the fastest in the world when it debuted in 1993. It was the first computer to break the 100 gigaflops barrier. Each processor board held 256MB of central memory.

Later on, the system was upgraded to 167 processors, with a Linpack performance of 170 gigaflops, which allowed it to hold the No. 1 spot until December 1995.

Above: Used, among other things, to calculate wind turbulence on aircraft, the Numerical Wind Tunnel looked quite aerodynamic itself.

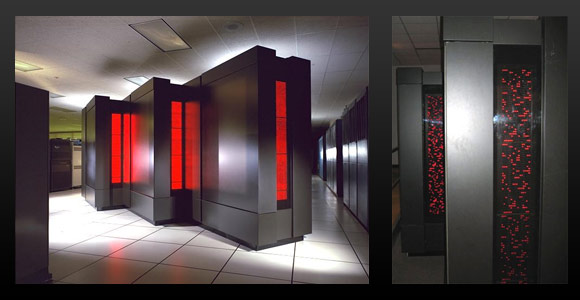

ASCI Red

The ASCI Red, a collaboration between Intel and Sandia Labs, was the world’s fastest computer from 1997 to 2000. The original ASCI Red used Pentium Pro processors clocked at 200 MHz, later upgraded to Pentium II OverDrive processors operating at 333 MHz. It was the first computer to break the teraflops barrier (and after the processor upgrade it passed 2 teraflops). The machine had 4,510 compute nodes (with close to 10,000 Pentium Pro processors), a total of 1,212GB of RAM, and 12.5TB of disk storage. The upgraded version of ASCI Red was housed in 104 cabinets, taking up 2,500 square feet (230 square meters). ASCI stands for Accelerated Strategic Computing Initiative, a U.S. government initiative to replace live nuclear testing with simulation.

From June 1997 to June 2000, ASCI Red was ranked as the fastest machine available. It was decommissioned in 2006, after 10 years of use, having earned a reputation for reliability that’s never been beaten.

Above: The ASCI Red provided the computing power to simulate nuclear testing, doing away with live tests.

ASCI White

ASCI White took over as the world’s fastest supercomputer after ASCI Red and kept that position from 2000 to 2002. Although similar in name, the ASCI White was completely different from ASCI Red. Intel had constructed the ASCI Red, but the ASCI White was an IBM system, based on IBM’s RS/6000 SP computer system. It housed a total of 8,192 POWER3 processors operating at 375 MHz, 6TB of RAM, and 160TB of disk storage. The ASCI White was capable of more than 12.3 teraflops.

It was eventually outmatched by the Earth Simulator and was decommissioned in July 2006.

Above: Fish-eye view of the ASCI White.

The Earth Simulator

The Earth Simulator was built in 1997 by NEC for the Japan Aerospace Exploration Agency, the Japan Atomic Energy Research Institute, and the Japan Marine Science and Technology Center. It was the world’s fastest computer from 2002 to 2004. The Earth Simulator was used to run global climate simulations for both the atmosphere and the oceans. Based on NEC’s SX-6 architecture, it had a total of 5,120 processors, 10TB of RAM, and 700TB of disk space (plus 1.6PB of mass storage in tape drives). Its nodes were installed two per 1 meter x 1.4 meter x 2 meter cabinet, and each cabinet consumed 20kW of power. The initial Earth Simulator had a theoretical top performance of 40 teraflops, though “only” 35.86 teraflops was achieved. An upgraded version of the system is still in use.
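To put the gap between theoretical and achieved performance in perspective, here is a minimal Python sketch that works out the ratio from the two figures quoted above (40 teraflops theoretical, 35.86 teraflops achieved). The function name `efficiency` is made up for illustration and is not part of any benchmark suite.

```python
def efficiency(achieved_tflops: float, peak_tflops: float) -> float:
    """Return achieved performance as a fraction of theoretical peak."""
    if peak_tflops <= 0:
        raise ValueError("peak_tflops must be positive")
    return achieved_tflops / peak_tflops


# Figures quoted in this post for the initial Earth Simulator.
ratio = efficiency(35.86, 40.0)
print(f"Achieved {ratio:.1%} of theoretical peak")  # roughly 90%
```

In other words, the “only” in the paragraph above is tongue-in-cheek: the machine delivered close to nine tenths of its theoretical peak.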

Above: The Earth Simulator. The Japanese seem to name their supercomputers very clearly by their purpose (judging by this one and Fujitsu’s Numerical Wind Tunnel).

Blue Gene/L

IBM initially developed the Blue Gene family of supercomputers to simulate biochemical processes involving proteins. The Blue Gene/L at the Lawrence Livermore National Laboratory (LLNL) was the world’s fastest computer from November 2004 until 2008, when it lost its crown to another IBM project, the Roadrunner. In this configuration, the Blue Gene/L at LLNL had 131,072 IBM PowerPC processors running at 700 MHz, a total of 49.1TB of RAM, 1.89PB of disk space, and a theoretical peak performance of 367 teraflops. A more beefed-up version of the system achieved a peak performance of 596 teraflops.

For another very powerful Blue Gene computer, this time based on Blue Gene/P, check out the Jugene machine, which was once the fastest supercomputer in Europe.

Above: This is the Blue Gene/L supercomputer that took over the performance crown from the Earth Simulator.

Jaguar

Located at the Oak Ridge National Laboratory, this Cray supercomputer was capable of 1.64 petaflops. The Jaguar went through several iterations and used Cray’s XT4 and XT5 architectures with quad-core AMD Opteron processors. It had more than 180,000 processing cores, a total of 362TB of RAM, and 10PB of disk space. The Jaguar occupied 284 cabinets, and the XT5 section alone (200 cabinets) was as large as an NBA basketball court. With a performance of just over 1 petaflops, it was the second-fastest computer in the world in 2008.

Above: The Jaguar supercomputer, 284 cabinets of hardware.

IBM Roadrunner

The IBM Roadrunner was designed to reach 1.7 petaflops and, in 2008, became the world’s fastest computer. It was the first computer able to sustain a performance of 1 petaflops. It had 12,960 IBM PowerXCell 8i processors operating at 3.2 GHz and 6,480 dual-core AMD Opteron processors operating at 1.8 GHz, resulting in a total of 130,464 processor cores. It also had more than 100TB of RAM. The Roadrunner supercomputer was housed in 296 racks and occupied 6,000 square feet (560 square meters) at the Los Alamos National Laboratory in New Mexico.

After a lifetime of just five years, the IBM Roadrunner was shut down in March 2013 because of its low energy efficiency.

Above: The Roadrunner fills up almost 6,000 square feet. It’s funny how although computer components keep getting smaller over the years, supercomputers just keep getting larger.

And if you think these were impressive, look at what followed. In 2019, two IBM-built machines sit at the top of the supercomputer list. Summit (capable of 200 petaflops) and Sierra (capable of 125 petaflops) are installed, respectively, at the Department of Energy’s Oak Ridge National Laboratory and at Lawrence Livermore National Laboratory in California, and are used in diverse fields: climatology, cosmology, medicine, and predictive applications in stockpile stewardship.
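For a sense of scale, the rough Python sketch below compares a few of the performance figures quoted in this post against the Cray-1. It mixes peak, design, and measured numbers exactly as the post quotes them, so treat the results as ballpark only. The `QUOTED_FLOPS` table and the `speedup` helper are names invented for this illustration.

```python
# Unit multipliers for floating-point operations per second.
MEGA, GIGA, TERA, PETA = 1e6, 1e9, 1e12, 1e15

# Figures as quoted in this post (a mix of peak and measured values).
QUOTED_FLOPS = {
    "Cray-1": 250 * MEGA,
    "Cray-2": 1.9 * GIGA,
    "Numerical Wind Tunnel": 124.5 * GIGA,
    "ASCI White": 12.3 * TERA,
    "Earth Simulator": 40 * TERA,
    "Blue Gene/L": 367 * TERA,
    "Roadrunner": 1.7 * PETA,
    "Summit": 200 * PETA,
}


def speedup(baseline: str, machine: str) -> float:
    """Return how many times faster `machine` is than `baseline`."""
    return QUOTED_FLOPS[machine] / QUOTED_FLOPS[baseline]


for name in QUOTED_FLOPS:
    print(f"{name:<22} {speedup('Cray-1', name):>15,.0f}x the Cray-1")
```

By these numbers, Summit comes out at roughly 800 million times the quoted peak of the Cray-1.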

If you enjoyed this look into the past, we recommend you check out our posts about the History of PC Hardware and Computer Data Storage.

Image sources:

Cray-1 1 and 2. Cray-2 1 and 2. CM-5 1 and 2. Numerical Wind Tunnel. ASCI Red 1 and 2. ASCI White. Earth Simulator 1 and 2. Blue Gene/L. Jaguar. Roadrunner 1 and 2.

Note: This article first appeared on this blog back in 2009, and we have updated the content.