Change comes with risk. This is as true for a one-person IT department as it is for a cloud giant. And earlier this year, a Microsoft outage demonstrated how high-performing teams aren’t immune to change-induced service disruptions.

Less than two weeks after a Windows update unexpectedly deleted icons and shortcuts from users’ PCs, Microsoft experienced a different change-induced issue. This time it was related to their own infrastructure. Specifically, a WAN update took down multiple popular services belonging to Microsoft 365. The downtime lasted for several hours on January 25, 2023.

This article will unpack what happened during this outage and why. You’ll learn what you can do to reduce the risk and impact of outages in your environments.

Scope of the outage

The Microsoft outage—which is logged under Microsoft service incident number MO502273—impacted multiple services, including these popular applications:

- Teams

- Exchange Online

- Outlook Online

- SharePoint Online

- OneDrive for Business

- Power BI

- Microsoft Graph

- Microsoft Intune

- Microsoft Defender for Identity

Microsoft Store and Xbox Live apps that depend on Azure servers were also affected.

With Microsoft Teams alone serving over 280 million monthly users, it’s safe to say the outage had a widespread impact.

Overall, the incident lasted nearly seven and a half hours. However, the broadest impact occurred during a 90-minute window between the beginning of the incident and the rollback of the change. Here’s how the timeline came together:

- 07:31 UTC: The Microsoft 365 Status Twitter account tweets that they are investigating issues impacting multiple Microsoft 365 services.

- 08:15 UTC: A networking issue is identified as the likely cause of the outage.

- 09:26 UTC: Microsoft announces that a change suspected to have caused the outage has been rolled back.

- 14:31 UTC: Microsoft confirms that impacted services are recovered and stable.

Root cause analysis

As with many outages, some wondered if this one was the result of a cyberattack. Others questioned if the outage was related to the recent large-scale layoffs at the tech giant. In a statement given to BBC, Microsoft indicated that a cyberattack was not involved. Instead, it determined the root cause to be a WAN update. Rolling back the update resolved the issue.

WAN and BGP

Further analysis showed that the network issues caused by the updates were related to the rapid readvertising of border gateway protocol (BGP) router prefixes, leading to significant packet loss. Let’s take a step back to unpack BGP.

BGP is the routing protocol for internet traffic, facilitating communication between autonomous systems (ASes). An AS is a group of routable addresses with a routing policy that belongs to one organization. ASes use BGP—specifically external BGP (eBGP)—to advertise routing information with its peers, build routing tables, and make decisions about path selection. All of these are important processes that keep the internet running.

After the WAN update, multiple network prefixes that Microsoft ASes were advertising were withdrawn and readvertised several times. The cycle of advertisements impacted route selection on other ASes and led to a flood of traffic to systems that couldn’t keep up, resulting in large-scale packet loss.

Learning from the outage

Even if you don’t operate IT infrastructure at the same scale as Microsoft, you can learn plenty of good lessons from this incident. Here are our top three takeaways:

Takeaway #1: Expect complex systems to have issues.

IT Ops is hard. You’re dealing with complex dependency chains, dynamic workloads, and rapid changes. When dealing with complex systems, you should occasionally expect something to go wrong. The key is to plan for incidents, implementing contingencies that intelligently balance risk.

Microsoft’s CTO, Mark Russinovich, made this clear back in 2020 in a post that was aptly titled “Advancing the outage experience—automation, communication, and transparency”:

We will never be able to prevent all outages. In addition to Microsoft trying to prevent failures, when building reliable applications in the cloud your goal should be to minimize the effects of any single failing component.

Microsoft’s CTO, Mark Russinovich

While Russinovich focused on cloud infrastructure in his post, his fundamental idea holds true for most IT infrastructure.

Error budgets are a great way to balance planning for this complexity without overengineering a system or becoming too change-averse. Error budgets place a ceiling on how much failure or downtime can occur without violating agreements. You can’t predict every possible failure, but you can architect to stay within your error budgets and take corrective action if you don’t. This practice can help you strike a balance that makes business sense.

Takeaway #2: Test your updates.

Updates are a regular part of IT life, and patches are often necessary to keep your infrastructure secure and performant. However, updates also come with risks. Microsoft’s outage provides a textbook example of why testing before you roll out an upgrade is important.

Thorough testing of a patch before you deploy it to production is ideal, as it can help you identify major problems before they cause downtime. That’s why testing is a key part of many patching guidelines, frameworks, and best practices. However, you also need to weigh the tradeoff between the thoroughness of your testing and the time it takes for a patch to reach production. Even a National Institute of Standards and Technology (NIST) patching guide acknowledges the tradeoffs between early deployment and more testing.

A QA or staging environment that mirrors production is a great way to test an upgrade before release. Unfortunately, you don’t always have a test environment when you’re working in IT, especially if you’re patching hardware appliances or network infrastructure. In these cases, teams can reduce risk with the following:

- Incremental rollouts: If the upgrade applies to multiple sites, servers, or appliances, roll it out incrementally to a small subset. Verify the change works as expected, then proceed to the next batch. This limits the risk of an issue impacting your entire deployment.

- Performance monitoring:Monitor performance after an upgrade to detect anomalies before they become outright service disruptions. This also enables you to verify stability after recovery from an incident.

Takeaway #3: Have a rollback plan.

Microsoft was able to restore service by rolling back their update. Even with thorough testing in place, you will always have the potential need to roll back a change. If you only take one thing away from Microsoft’s outage, let it be this: Always have a rollback plan.

Ideally, your rollback plan should ensure that you can restore service after a failure while meeting your recovery time objective (RTO) and recovery point objective (RPO). If possible, aim to automate the process of rolling back an update. This will minimize the time it takes to recover. Additionally, for mission-critical infrastructure, you should thoroughly test your rollback plan to make sure it will work when you need it to.

How Pingdom® can help

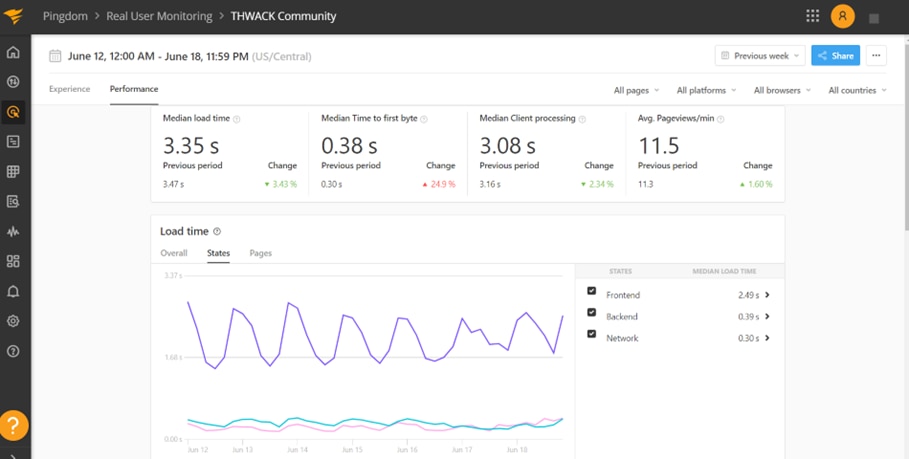

Because modern IT is a complex web of dependencies, a change that causes a service disruption might go unnoticed for much longer than you (or your users) can stomach. With multiple checks—including ping, HTTP, and DNS—from over 100 servers across the globe, Pingdom enables you to quickly detect and respond if something goes wrong in production.

Additionally, real user monitoring (RUM) helps teams understand application performance from an end user’s perspective. In the example of the Microsoft outage, Pingdom’s RUM capabilities could help a team identify who is impacted by an outage. You would be able to visually explore performance impacts across regions and adapt your remediation rollout.